Since 2012, Prof. Dr. Oliver Bendel (FHNW School of Business) has initiated and implemented a wide variety of chatbots and voice assistants. These systems have been covered by the media and have even attracted the interest of NASA. His theoretical foundation and practical expertise stem from his doctoral dissertation on this topic, which dates back a quarter of a century. Since 2022, his focus has been on dialogue systems for dead and endangered languages. This work has resulted in @ve, a chatbot for Latin (implemented by Karim N’Diaye), @llegra, a voice-enabled chatbot for Vallader, a variety of Romansh (implemented by Dalil Jabou), and kAIxo, a voice-enabled chatbot for Basque (implemented by Nicolas Lluis Araya). In addition, Oliver Bendel is experimenting with chatbots for extinct languages such as Egyptian and Akkadian. On April 8, 2026, his article “Chatbots for Dead, Endangered, and Extinct Languages: Possibilities and Limitations of Generative AI for Continuing Education” was published in Wiley Industry News. The article focuses on the question of how chatbots based on generative AI can contribute to the preservation and promotion of dead, endangered, and extinct languages in continuing education, as well as in formal education. Oliver Bendel is also involved in the University of Applied Sciences of the Grisons’ IdiomVoice project, which will be presented on June 17, 2026, at the GRdigital Project Showcase during an evening networking reception. Visitors to the showcase will have the opportunity to explore the current prototype and interact directly in Sursilvan with the two chatbot characters, Lina and Brida. With @llegra, Lina, and Brida, Graubünden has gained several digital ambassadors for its Romansh idioms.

GROUND Workshop in Genoa

The GROUND workshop (advancing GROup UNderstanding and robots’ aDaptive behavior) is back for its third edition and will take place on June 30, 2025, as part of the IAS-19 Conference in Genoa, Italy. This full-day event focuses on robotics in multiparty scenarios and human-robot interaction in group settings. It offers a unique platform for researchers and practitioners to share ideas, explore new approaches, and connect with a passionate community working at the intersection of social interaction and robotics. Participants can look forward to inspiring keynotes and talks by Prof. Dr. Silvia Rossi, Prof. Dr. Oliver Bendel, and Dr. Isabel Neto, along with a hands-on tutorial led by Lorenzo Ferrini. The workshop invites short contributions (2–4 pages plus references) that present innovative strategies for advancing group-robot interaction. Key dates to keep in mind: the submission deadline is May 31, 2025, notifications of acceptance will be sent out on June 17, and the final camera-ready papers are due by June 22. For more information, visit the workshop website, the IAS-19 conference page, or go directly to the submission portal. Questions can be directed to: groundworkshop@gmail.com.

Social Robots in Olten

The elective module “Social Robots” by Prof. Dr. Oliver Bendel took place from November 4 to 6, 2024, at the Olten Campus. Tamara Siegmann (with the online presentation of the paper “Social and Collaborative Robots in Prison”) was invited as a guest speaker. She made it clear to those present that every member of the university can make a contribution to research. On site were Pepper and NAO from the FHNW Robo Labs as well as Unitree Go2, Alpha Mini, Cozmo, Furby, and Booboo (aka Boo Boo) from Oliver Bendel’s privately funded Social Robots Lab. Unitree Go2 – also called Bao (Chinese for “treasure, darling”) by the lecturer – and Booboo were particularly well received. At the end of the elective module, the students designed social robots – also with the help of generative AI – that they found useful, meaningful, or simply attractive. The elective modules have been offered since 2021 and are very popular.

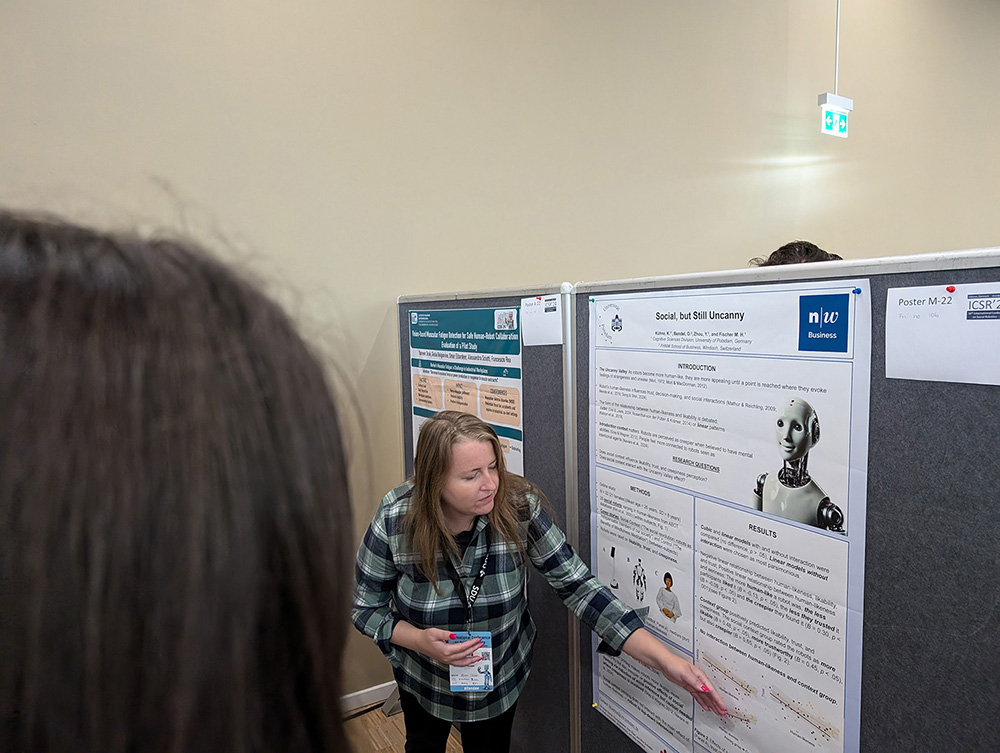

The Uncanny Social Robot

The uncanny valley effect is a famous hypothesis. Whether it can be influenced by context is still unclear. In an online experiment, Katharina Kühne and her co-authors Oliver Bendel, Yuefang Zue, and Martin Fischer found a negative linear relationship between a robot’s human likeness and its likeability and trustworthiness, and a positive linear relationship between a robot’s human likeness and its uncanniness. “Social context priming improved overall likability and trust of robots but did not modulate the Uncanny Valley effect.” (Abstract) Katharina Kühne outlined these conclusions in her presentation “Social, but Still Uncanny” – the title of the paper – at the International Conference on Social Robotics 2024 in Odense, Denmark. Like Yuefang Zue and Martin Fischer, she is a researcher at the University of Potsdam. Oliver Bendel teaches and researches at the FHNW School of Business. Together with Tamara Siegmann, he presented a second paper at the ICSR.

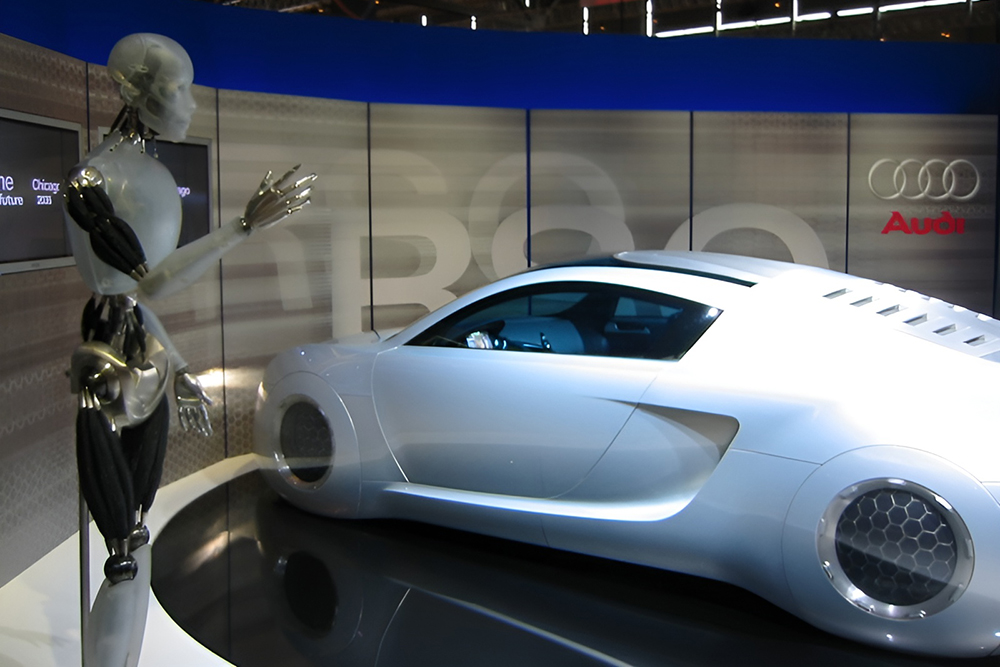

Back to the Future

Elon Musk presented the prototypes of his new Cybercab and his new Robovan in October 2024. In this context, he once again said: “The future should look like the future.” (TechCrunch, 10 October 2024) This is an astonishing statement, because if you know a little about the history of robot and vehicle construction, you know that Elon Musk is drawing on ideas that were popular 20 to 80 years ago. Brass, copper, silver, gold, and large, matt or polished surfaces – reminiscent of Elektro (1939) and his animal companion Sparko (1940) as well as futuristic vehicles such as Gil Spear’s Chrysler two-seater (1941). Science fiction and fantasy are also likely to play a role in the design of Tesla and co – think of steampunk and cyberpunk in general, and think of movies like “Metropolis” (1927) and “I, Robot” (2004). Elon Musk generally likes to mix ideas from fiction into his developments, for example the large language model called Grok, which takes its name from “Stranger in a Strange Land” and is intended to fulfil claims formulated in “The Hitchhiker’s Guide to the Galaxy”. TechCrunch also points out the backward-looking nature of the Robovan: “The Robovan has a retro-futuristic look – somewhere between a bus from The Jetsons and a toaster from the 1950s. It features silver metallic sides with black details, and strips of light running parallel to the ground along its sides, with doors that slide out from the middle.” (TechCrunch, 10 October 2024) Robots and robotic vehicles could look very different in the 2020s (Photo: Eirik Newth; cropped by Robophilosophy).

Keynote by Melanie Mitchell

On the third day of Robophilosophy 2024, Melanie Mitchell, Professor at the Santa Fe Institute (USA), gave a keynote speech entitled “AI’s Challenge to Understanding the World”. From the abstract: “I will survey a debate in the artificial intelligence (AI) research community on the extent to which current AI systems can be said to ‘understand’ language and the physical and social situations language encodes. I will describe arguments that have been made for and against such understanding, hypothesize about what humanlike understanding entails, and discuss what methods can be used to fairly evaluate understanding and intelligence in AI systems.” (Website Robophilosophy 2024) In this keynote – as in previous keynotes and presentations – the limitations of AI and generative AI were emphasized. In response to a question from an audience member, the potential of these technologies was also acknowledged.

Malicious Attacks Against Autonomous Vehicles

In their paper “Malicious Attacks against Multi-Sensor Fusion in Autonomous Driving”, the authors present the first study on the vulnerability of multi-sensor fusion systems using LiDAR, camera, and radar. “Specifically, we propose a novel attack method that can simultaneously attack all three types of sensing modalities using a single type of adversarial object. The adversarial object can be easily fabricated at low cost, and the proposed attack can be easily performed with high stealthiness and flexibility in practice. Extensive experiments based on a real-world AV testbed show that the proposed attack can continuously hide a target vehicle from the perception system of a victim AV using only two small adversarial objects.” (Abstract) In an article for ICTkommunikation on 6 March 2024, Oliver Bendel presented ten ways in which sensors can be attacked from the outside. On his behalf, M. Hashem Birahjakli investigated further possible attacks on self-driving cars as part of his final thesis in 2020. ‘The results of the work suggest that every 14-year-old girl could disable a self-driving car.’ Boys too, of course, if they have comparable equipment with them (Image: Ideogram).

ICSR + BioMed 2024

Besides the ICSR in Odense, which focuses on social robotics and artificial intelligence, there is also the ICSR in Naples this year, which organizes a robot competition. In addition, an ICSR conference focusing on biomedicine and the healthcare sector will take place in Singapore from August 16–18, 2024. The website states: “The 16th International Conference on Social Robotics + BioMed (ICSR + BioMed 2024) focuses on interdisciplinary innovation on Bio-inspired, Biomedical, and Surgical Robotics. By fostering the much-needed merging of these disciplines, together with fast emerging Biotech, the conference aims to ensure the lesson learned from these communities blend to unleash the real potential of robots. … The conference will serve as the scientific, technical, and business platform for fostering collaboration, exploration, and advancement in these cutting-edge fields. It will showcase the latest breakthroughs and methodologies, shaping the future of robotics design and applications across several sectors including Biomedical and healthcare.” (Website ICSR) Papers must be submitted by June 5, 2024. Further information on the conference is available at robicon2024.org.

Goodbye Apple Car, Hello GenAI

Apple’s ambitions to enter the automotive business are apparently history. This is reported by Bloomberg. “Apple Inc. is canceling a decadelong effort to build an electric car, according to people with knowledge of the matter, abandoning one of the most ambitious projects in the history of the company.” (Bloomberg, 27 February 2024) Numerous media outlets around the world have picked up the story. The company had intended to launch an autonomous electric car on the market. Apple never communicated this publicly, but it was common knowledge. The project, which was part of the Special Projects Group (SPG), is now to be wound up, and the remaining employees are to focus on generative AI in future, where Apple wants to catch up in the coming months. So you could say: goodbye Apple car, hello GenAI.

A Robot Boat to Clean the Rivers

Millions of tons of plastic waste float down polluted urban rivers and industrial waterways and into the world’s oceans every year. According to Microsoft, a Hong Kong-based startup has come up with a solution to help stem these devastating waste flows. “Open Ocean Engineering has developed Clearbot Neo – a sleek AI-enabled robotic boat that autonomously collects tons of floating garbage that otherwise would wash into the Pacific from the territory’s busy harbor.” (Deayton 2023) After a long period of development, its inventors plan to scale up and have fleets of Clearbot Neos cleaning up and protecting waters around the globe. The start-up’s efforts are commendable. However, polluted rivers and harbors are not the only problem. A large proportion of plastic waste comes from the fishing industry. This was proven last year by The Ocean Cleanup project. So there are several places to start: We need to avoid plastic waste. Fishing activities must be reduced. And rivers, lakes and oceans must be cleared of plastic waste (Image: DALL-E 3).