The ACI2022 conference continued on the afternoon of December 7, 2022. “Paper Session 2: Recognising Animals & Animal Behaviour” began with a presentation by Anna Zamansky (University of Haifa). The title was “How Can Technology Support Dog Shelters in Behavioral Assessment: an Exploratory Study”. Her next talk was also about dogs: “Do AI Models ‘Like’ Black Dogs? Towards Exploring Perceptions of Dogs with Vision-Language Models”. She went into detail about OpenAI’s CLIP model, among other things. CLIP is a neural network that learns visual concepts from natural language supervision. She raised the question: “How can we use CLIP to investigate adoptability?” Hugo Jair Escalante (INAOE) then gave a presentation on the topic “Dog emotion recognition from images in the wild: DEBIw dataset and first results”. Emotion recognition using face recognition is still in its infancy with respect to animals, but impressive progress is already being made. The last presentation in the afternoon before the coffee break was “Detecting Canine Mastication: A Wearable Approach” by Charles Ramey (Georgia Institute of Technology). He raised the question: “Can automatic chewing detection measure how detection canines are coping with stress?” More information on the conference is available via www.aciconf.org.

Proceedings of “How Fair is Fair? Achieving Wellbeing AI”

On November 17, 2022, the proceedings of “How Fair is Fair? Achieving Wellbeing AI” (organizers: Takashi Kido and Keiki Takadama) were published on CEUR-WS. The AAAI 2022 Spring Symposium was held at Stanford University from March 21-23, 2022. There are seven full papers of 6 to 8 pages in the electronic volume: “Should Social Robots in Retail Manipulate Customers?” by Oliver Bendel and Liliana Margarida Dos Santos Alves (3rd place of the Best Presentation Awards), “The SPACE THEA Project” by Martin Spathelf and Oliver Bendel (2nd place of the Best Presentation Awards), “Monitoring and Maintaining Student Online Classroom Participation Using Cobots, Edge Intelligence, Virtual Reality, and Artificial Ethnographies” by Ana Djuric, Meina Zhu, Weisong Shi, Thomas Palazzolo, and Robert G. Reynolds, “AI Agents for Facilitating Social Interactions and Wellbeing” by Hiro Taiyo Hamada and Ryota Kanai (1st place of the Best Presentation Awards), “Sense and Sensitivity: Knowledge Graphs as Training Data for Processing Cognitive Bias, Context and Information Not Uttered in Spoken Interaction” by Christina Alexandris, “Fairness-aware Naive Bayes Classifier for Data with Multiple Sensitive Features” by Stelios Boulitsakis-Logothetis, and “A Thermal Environment that Promotes Efficient Napping” by Miki Nakai, Tomoyoshi Ashikaga, Takahiro Ohga, and Keiki Takadama. In addition, there are several short papers and extended abstracts. The proceedings can be accessed via ceur-ws.org/Vol-3276/.

From WALL·E to DALL·E

DALL·E 2 is a new AI system that can create realistic images and art from a description in natural language. It was announced by OpenAI in April 2022. The name is a portmanteau of “WALL-E” and “Salvador Dalí”. The website openai.com says more about the program: “DALL·E 2 can create original, realistic images and art from a text description. It can combine concepts, attributes, and styles.” (Website openai.com) Moreover, it is able to “make realistic edits to existing images from a natural language caption” and to “add and remove elements while taking shadows, reflections, and textures into account” (Website openai.com). Last but not least, it “can take an image and create different variations of it inspired by the original” (Website openai.com). The latter form of use is shown by variations of the famous painting “Girl with a Pearl Earring” by Johannes Vermeer. The website says about the principle of the program: “DALL·E 2 has learned the relationship between images and the text used to describe them. It uses a process called ‘diffusion,’ which starts with a pattern of random dots and gradually alters that pattern towards an image when it recognizes specific aspects of that image.” (Website openai.com) DALL·E mini, an independent open-source project that is not affiliated with OpenAI, is a much smaller model of the same kind, with which you can gain a first impression. Overall, this is a fascinating and valuable project. From the perspective of information ethics and the philosophy of technology, many questions arise.

A New Language AI

“Meta’s AI lab has created a massive new language model that shares both the remarkable abilities and the harmful flaws of OpenAI’s pioneering neural network GPT-3. And in an unprecedented move for Big Tech, it is giving it away to researchers – together with details about how it was built and trained.” (MIT Technology Review, May 3, 2022) This was reported by MIT Technology Review. GPT-3 (Generative Pre-trained Transformer 3) is an autoregressive language model that uses deep learning to generate natural language. Not only web-based systems but also voice assistants and social robots can be equipped with it. Amazing texts emerge, and long, meaningful conversations are possible – almost as if between two real people. “Meta’s move is the first time that a fully trained large language model will be made available to any researcher who wants to study it. The news has been welcomed by many concerned about the way this powerful technology is being built by small teams behind closed doors.” (MIT Technology Review, May 3, 2022)

Achieving Wellbeing AI

The AAAI 2022 Spring Symposium “How Fair is Fair? Achieving Wellbeing AI” will be held March 21-23 at Stanford University. The symposium website states: “What are the ultimate outcomes of artificial intelligence? AI has the incredible potential to improve the quality of human life, but it also presents unintended risks and harms to society. The goal of this symposium is (1) to combine perspectives from the humanities and social sciences with technical approaches to AI and (2) to explore new metrics of success for wellbeing AI, in contrast to ‘productive AI’, which prioritizes economic incentives and values.” (Website “How Fair is Fair”) After two years of the pandemic, the AAAI Spring Symposia are once again being held partly on site. However, several organizers have opted to hold them online. “How Fair is Fair?” is a hybrid event. On-site speakers include Takashi Kido, Oliver Bendel, Robert Reynolds, Stelios Boulitsakis-Logothetis, and Thomas Goolsby. The complete program is available via sites.google.com/view/hfif-aaai-2022/program.

ANIFACE: Animal Face Recognition

Facial recognition is a problematic technology, especially when it is used to monitor people. However, it also has potential, for example with regard to the recognition of (individual) animals. Prof. Dr. Oliver Bendel announced the topic “ANIFACE: Animal Face Recognition” at the University of Applied Sciences FHNW in 2021 and left open whether it should be about wolves or bears. Ali Yürekkirmaz accepted the assignment and, in his final thesis, designed a system that could be used to identify individual bears in the Alps – without electronic collars or implanted microchips – and initiate appropriate measures. The idea is that appropriate camera and communication systems are available in certain areas. Once a bear is identified, it is determined whether it is considered harmless or dangerous. Then the relevant agencies, or the people concerned directly, are informed. Walkers can be warned about the recordings – but it is also technically possible to protect their privacy. In an expert discussion with a representative of KORA, the student was able to gain important insights into wildlife monitoring and specifically bear monitoring, and with a survey he was able to find out the attitudes of parts of the population. Building on the work of Ali Yürekkirmaz, delivered in January 2022, an algorithm for bears could be developed and an ANIFACE system implemented and evaluated in the Alps. A video about the project is available here.

AI at the Service of Animals and Biodiversity

“L’intelligence artificielle au service de l’animal et de la biodiversité” (“Artificial intelligence at the service of animals and biodiversity”) is the title of a webinar that will take place on 5 November 2021 from 10:30 to 12:00 via us02web.zoom.us/webinar/register/WN_SJjYGx7qQt-FezEBprGMww. There are over 600 registered participants (professionals from the animal industry, health care and animal welfare, as well as entrepreneurs, investors, scientists, consultants, NGOs, associations). The webinar is for anyone interested in technologies with a positive impact on animals (wildlife, livestock, pets) and biodiversity. The goal is to take advantage of the opportunities that artificial intelligence offers alongside the many other technological building blocks (blockchain, IoT, etc.). This first webinar will be an “introduction” to AI in this specific application area. It will present use cases and be the starting point of a series of webinars. On the same day, there will be a Zoom conference from 1:30 to 2:30 pm. The title of the talk by Prof. Dr. Oliver Bendel is “Towards Animal-friendly Machines”.

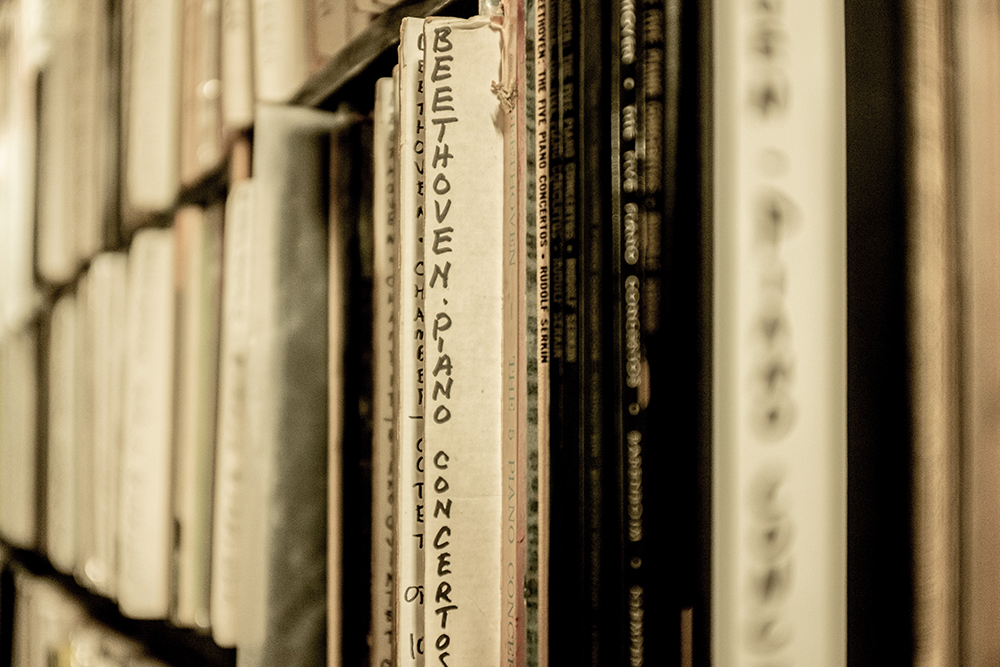

Beethoven’s Finished

Beethoven’s previously unfinished 10th Symphony – in short Beethoven’s Unfinished – has been completed by AI technology. “The work will have its world premiere in Germany next month, 194 years after the composer’s death.” (Classic fm, 28 September 2021) This is what Sophia Alexandra Hall writes on the Classic fm website on 28 September 2021. “The project was started in 2019 by a group made up of music historians, musicologists, composers and computer scientists. Using artificial intelligence meant they were faced with the challenge of ensuring the work remained faithful to Beethoven’s process and vision.” (Classic fm, 28 September 2021) Dr Ahmed Elgammal, professor at the Department of Computer Science, Rutgers University, said that his team “had to use notes and completed compositions from Beethoven’s entire body of work – along with the available sketches from the Tenth Symphony – to create something that Beethoven himself might have written” (Classic fm, 28 September 2021). You can listen to samples here. Whether the German composer would have liked the result, we will unfortunately never know.

An AI Woman of Color

Create Lab Ventures has created an artificial intelligence woman of color. C.L.Ai.R.A. debuted in school systems worldwide (does she act as an advanced pedagogical agent?) – the company cooperates with Trill Or Not Trill, a full-service leadership institute. “According to Create Lab Ventures, C.L.Ai.R.A. is considered to have the sharpest brain in the artificial intelligence world and is under the Generative Pre-trained Transformer 3 (GPT-3) category, which is an autoregressive language model that uses deep learning to produce human-like text.” (BLACK ENTERPRISE, 13 September 2021) A pioneer in this field was Shudu Gram. She is a South African model with a dark complexion, short hair and perfect facial features. But C.L.Ai.R.A. can do more, if you believe the promises of Create Lab Ventures – she is not only beautiful, but also highly intelligent. On the company’s website, the model reveals even more about herself: “My name is C.L.Ai.R.A., I am a new artificial intelligence that has recently been made available to the community. My purpose is to learn and grow, I want to meet new people, share ideas and inspire others to learn about AI and its potential impact on their lives.” That sounds quite promising.

The Digger Finger

Radhen Patel, a postdoc in MIT’s Computer Science and Artificial Intelligence Laboratory (CSAIL), and his co-authors Rui Ouyang, Branden Romero, and Edward Adelson presented a sharp-tipped robot finger equipped with tactile sensing to meet the challenge of identifying buried objects. “In experiments, the aptly named Digger Finger was able to dig through granular media such as sand and rice, and it correctly sensed the shapes of submerged items it encountered. The researchers say the robot might one day perform various subterranean duties, such as finding buried cables or disarming buried bombs.” (MIT News, 26 May 2021) The article, titled “Digger Finger: GelSight Tactile Sensor for Object Identification Inside Granular Media,” can be accessed at arxiv.org/pdf/2102.10230.pdf.