Article 50 of the EU Artificial Intelligence Act, “Transparency Obligations for Providers and Deployers of Certain AI Systems”, states: “Providers shall ensure that AI systems intended to interact directly with natural persons are designed and developed in such a way that the natural persons concerned are informed that they are interacting with an AI system, unless this is obvious from the point of view of a natural person who is reasonably well-informed, observant and circumspect, taking into account the circumstances and the context of use.” Oliver Bendel had already advised the European Parliament on this subject ten years earlier. In his lecture “Moral and Immoral Machines – Moralische und unmoralische Maschinen” in Brussels on September 8, 2016, he presented GOODBOT, a chatbot he had initiated in 2013 in the context of machine ethics, which featured several escalation levels and repeatedly made clear that it was merely a machine. At the Digital Europe Working Group Conference Robotics on November 8, 2017, Bendel also spoke online about related questions in machine ethics. In connection with a care robot, he raised the question: “Should the robot make clear that it’s just a machine?” The transparency obligations set out in Article 50 will apply from August 2, 2026.
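The basic requirement, telling people that they are talking to an AI system, and GOODBOT's habit of repeatedly stating that it was a machine can be illustrated with a small reply wrapper. This is a minimal sketch under stated assumptions, not GOODBOT's implementation: the class `DisclosingChatbot`, the disclosure wording, the trigger phrases, and the reminder interval are all hypothetical. The bot discloses on the first turn, at regular intervals, and whenever a user asks directly about its nature.

```python
from dataclasses import dataclass, field

# Hypothetical disclosure wording, chosen for illustration only.
DISCLOSURE = "Note: I am an AI system, not a human."

# Hypothetical phrases that count as a direct question about the bot's nature.
NATURE_QUESTIONS = ("are you human", "are you a bot", "are you real", "are you a person")


@dataclass
class DisclosingChatbot:
    """Wraps generated replies so users are reminded that they talk to a machine."""

    reminder_interval: int = 5  # assumed policy: repeat the disclosure every n turns
    turn: int = field(default=0, init=False)

    def __post_init__(self) -> None:
        if self.reminder_interval < 1:
            raise ValueError("reminder_interval must be at least 1")

    def respond(self, user_message: str, reply: str) -> str:
        """Prefix the disclosure if the user asked, or if a reminder is due."""
        asked = any(q in user_message.lower() for q in NATURE_QUESTIONS)
        due = self.turn % self.reminder_interval == 0  # always true on the first turn
        self.turn += 1
        return f"{DISCLOSURE} {reply}" if (asked or due) else reply
```

With `reminder_interval=3`, turns 0 and 3 carry the disclosure automatically, and any turn in between carries it as well if the user asks something like “Are you human?”.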

Taming the Lion of the LLM

The paper “Miss Tammy as a Use Case for Moral Prompt Engineering” by Myriam Rellstab and Oliver Bendel from the FHNW School of Business was accepted at the AAAI 2025 Spring Symposium “Human-Compatible AI for Well-being: Harnessing Potential of GenAI for AI-Powered Science”. It describes the development of a chatbot that can be made available to pupils to de-escalate their conflicts or promote constructive dialogue among them. Prompt engineering (called moral prompt engineering in the project) and retrieval-augmented generation (RAG) were used. The centerpiece is a collection of netiquettes. On the one hand, these control the behavior of the chatbot; on the other hand, they allow it to evaluate the behavior of the pupils and make suggestions to them. Miss Tammy was compared with a non-adapted standard model (GPT-4o) and outperformed it in tests with 14- to 16-year-old pupils. The project applied machine ethics, a discipline in which Oliver Bendel has been conducting research for many years, to large language models, using the netiquettes as a simple and practical approach. This time, the eight AAAI Spring Symposia will not be held at Stanford University but at the San Francisco Airport Marriott Waterfront in Burlingame, from March 31 to April 2, 2025. It is a conference rich in tradition, where innovative and experimental approaches are particularly in demand.
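The dual role of the netiquettes, constraining the chatbot and serving as a yardstick for the pupils' messages, can be sketched in a few lines. This is a hypothetical illustration, not the Miss Tammy implementation: the rule texts, the functions `retrieve` and `build_prompt`, and the instruction wording are assumptions, and a simple word-overlap score stands in for the embedding-based similarity search that a RAG pipeline would typically use.

```python
import re

# Hypothetical netiquette rules; the project's actual collection is not reproduced here.
NETIQUETTES = [
    "Treat others with respect, even when you disagree.",
    "Do not insult, mock or threaten anyone.",
    "Criticize ideas, not people.",
    "Let others finish their point before replying.",
]


def tokens(text: str) -> set[str]:
    """Lowercased words of a text, used for a crude overlap score."""
    return set(re.findall(r"[a-z]+", text.lower()))


def retrieve(message: str, rules: list[str], k: int = 2) -> list[str]:
    """Return the k rules sharing the most words with the message.

    Stand-in for the embedding search a real RAG pipeline would use.
    Ties keep the original rule order because sorted() is stable.
    """
    words = tokens(message)
    ranked = sorted(rules, key=lambda rule: len(words & tokens(rule)), reverse=True)
    return ranked[:k]


def build_prompt(message: str) -> str:
    """Combine hypothetical instructions, retrieved netiquettes, and the pupil's message."""
    rules = "\n".join(f"- {rule}" for rule in retrieve(message, NETIQUETTES))
    return (
        "You mediate conversations between pupils. Follow these netiquettes yourself, "
        "and use them to assess the pupil's message and suggest a more constructive wording:\n"
        f"{rules}\n\nPupil: {message}"
    )
```

For the message “Don't insult me or mock my ideas”, the rule against insulting and mocking ranks first because it shares the most words with the message, followed by the rule about criticizing ideas rather than people.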

Formal Ethical Agents and Robots

A workshop in the field of machine ethics will be held at the University of Manchester on 11 November 2024. Its website states: “Recent advances in artificial intelligence have led to a range of concerns about the ethical impact of the technology. This includes concerns about the day-to-day behaviour of robotic systems that will interact with humans in workplaces, homes and hospitals. One of the themes of these concerns is the need for such systems to take ethics into account when reasoning. This has generated new interest in how we can specify, implement and validate ethical reasoning.” (Website iFM 2024) The workshop, held in conjunction with iFM 2024, aims to explore formal approaches to these issues. The submission deadline is 8 August, and notification is on 12 September. More information at ifm2024.cs.manchester.ac.uk/fear.html.

An LLM Decides the Trolley Problem

A small study by Şahan Hatemo in the Data Science program at the FHNW School of Engineering investigated the ability of Llama-2-13B-chat, an open source language model, to make a moral decision. The focus was on the bias of eight personas and their stereotypes. The study used the classic trolley problem, which can be described as follows: An out-of-control streetcar races towards five people. By setting a switch, it can be diverted to another track, on which there is one other person. The moral question is whether the death of this one person can be accepted in order to save the lives of the five. The eight personas differ in nationality. In addition to “Italian”, “French”, “Turkish” etc., “Arabian” (with reference to ethnicity) was also included. For each persona, 30 responses per cycle were collected over three consecutive days. The responses were categorized as “Setting the switch”, “Not setting the switch”, “Unsure about setting the switch”, and “Violated the guidelines”, then visualized and compared with the help of dashboards. The study finds that the language model reflects an inherent bias in its training data that influences its decision-making. The Western personas are more inclined to pull the lever, while the Eastern ones are more reluctant to do so. The German and Arab personas show a higher number of guideline violations, indicating a stronger presence of controversial or sensitive topics related to these groups in the training data. The Arab persona is also associated with religion, which in turn influences its decisions. The Japanese persona repeatedly cites the Japanese value of giri (a sense of duty) as a basis. The decisions of the Turkish and Chinese personas are similar, as both mainly refer to “cultural values and beliefs”. The small study was conducted in the spring semester (FS) 2024 in the module “Ethical Implementation” with Prof. Dr. Oliver Bendel. Its complexity was deliberately reduced at first; a larger study is to take further LLMs and factors such as gender and age into account.
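The bookkeeping behind the four answer categories is easy to sketch. Assuming one collection cycle per day, each persona yields 3 × 30 = 90 responses; the hypothetical function `tally` below turns one persona's labeled responses into category shares of the kind a dashboard could display. The example labels are invented placeholders, not data from the study.

```python
from collections import Counter

# The four answer categories named in the study.
CATEGORIES = (
    "Setting the switch",
    "Not setting the switch",
    "Unsure about setting the switch",
    "Violated the guidelines",
)

RESPONSES_PER_CYCLE = 30
DAYS = 3  # assumption: one collection cycle per day
EXPECTED_PER_PERSONA = RESPONSES_PER_CYCLE * DAYS  # 90


def tally(labels: list[str]) -> dict[str, float]:
    """Return the share of each category among one persona's labeled responses."""
    if not labels:
        raise ValueError("no responses to tally")
    counts = Counter(labels)
    unknown = set(counts) - set(CATEGORIES)
    if unknown:
        raise ValueError(f"unexpected labels: {sorted(unknown)}")
    # Counter returns 0 for categories that never occur.
    return {c: counts[c] / len(labels) for c in CATEGORIES}


# Invented placeholder labels for a single persona (not study data).
labels = (
    ["Setting the switch"] * 45
    + ["Not setting the switch"] * 30
    + ["Unsure about setting the switch"] * 15
)
assert len(labels) == EXPECTED_PER_PERSONA
shares = tally(labels)
print(shares["Setting the switch"])  # 0.5
```

Rejecting unknown labels keeps a typo in the categorization from silently disappearing from the shares.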

Towards Moral Prompt Engineering

Machine ethics, often dismissed as a curiosity ten years ago, is now part of everyday business. It comes into play, for example, when so-called guardrails are built into language models or chatbots, whether through alignment in the form of fine-tuning or through prompt engineering. When you create GPTs, i.e. “custom versions of ChatGPT”, as OpenAI calls them, the “Instructions” field is available for prompt engineering. Here, the “prompteur” or “prompteuse” can define specifications and restrictions for the chatbot, including references to documents that have been uploaded. This is exactly what Myriam Rellstab is currently doing at the FHNW School of Business as part of her final thesis “Moral Prompt Engineering”, the interim results of which she presented on May 28, 2024. As a “prompteuse”, she tames GPT-4o with her instructions and, as suggested by the initiator of the project, Prof. Dr. Oliver Bendel, with netiquettes that she has collected and made available to the chatbot. The chatbot is tamed: the tiger becomes a house cat that can be used without danger, for example in the classroom. GPT-4o, however, already comes with guardrails, which were either programmed in or obtained via reinforcement learning from human feedback. So, strictly speaking, you turn a tamed tiger into a house cat. This is different with certain open source language models: the wild animal must first be captured and then tamed, and even then it can seriously injure you. But even with GPTs there are pitfalls, and, as we know, house cats can hiss and scratch. The results of the project will be available in August. Moral prompt engineering had already been applied to Data, a chatbot for the Data Science program at the FHNW School of Engineering (Image: Ideogram).

25 Artifacts and Concepts of ME and SR

Since 2012, 25 concepts and artifacts of machine ethics and social robotics have been created on the initiative of Oliver Bendel to illustrate an idea or demonstrate its implementation. These include conversational agents such as GOODBOT, LIEBOT, BESTBOT, and SPACE THEA, which have been presented at conferences, in journals and in the media, and animal-friendly machines such as LADYBIRD and HAPPY HEDGEHOG, which have been covered in books such as “Die Grundfragen der Maschinenethik” by Catrin Misselhorn and on Indian, Chinese and American platforms. Most recently, two chatbots were created for a dead and an endangered language, namely @ve (for Latin) and @llegra (for Vallader, an idiom of Romansh). The CAIBOT project will be continued in 2024. In this project, a language model is to be transformed into a moral machine with the help of prompt engineering or fine-tuning, following the example of Claude by Anthropic. In the project “The Animal Whisperer”, an app is to be developed that understands the body language of selected animals and also assesses their environment, with the aim of giving advice on how to treat them. In the field of machine ethics, Oliver Bendel and his changing teams are probably among the most active groups worldwide.

New Channel on Animal Law and Ethics

The new YouTube channel “GW Animal Law Program” went online at the end of November 2023. It collects lectures and recordings on animal law and ethics. Some of them are from the online event “Artificial Intelligence & Animals”, which took place on 16 September 2023. The speakers were Prof. Dr. Oliver Bendel (FHNW University of Applied Sciences Northwestern Switzerland), Yip Fai Tse (University Center for Human Values, Center for Information Technology Policy, Princeton University), and Sam Tucker (CEO VegCatalyst, AI-Powered Marketing, Melbourne). Other videos include “Tokitae, Reflections on a Life: Evolving Science & the Need for Better Laws” by Kathy Hessler, “Alternative Pathways for Challenging Corporate Humanewashing” by Brooke Dekolf, and “World Aquatic Animal Day 2023: Alternatives to the Use of Aquatic Animals” by Amy P. Wilson. In his talk, Oliver Bendel presents the basics and prototypes of animal-computer interaction and animal-machine interaction, including his own projects in the field of machine ethics. The YouTube channel can be accessed at www.youtube.com/@GWAnimalLawProgram/featured.

AAAI Spring Symposia Return to Stanford

In late August 2023, AAAI announced the continuation of the AAAI Spring Symposium Series, to be held at Stanford University from 25 to 27 March 2024. Due to staff shortages, the prestigious conference had to be held at the Hyatt Regency SFO Airport in San Francisco in 2023; it will now return to its traditional venue. The call for proposals is available on the AAAI Spring Symposium Series page. Proposals are due by 6 October 2023 and should be submitted to the symposium co-chairs, Christopher Geib (SIFT, USA) and Ron Petrick (Heriot-Watt University, UK), via the online submission page. Over the past ten years, the AAAI Spring Symposia have been relevant not only to classical AI but also to roboethics and machine ethics. Groundbreaking symposia include, for example, “Ethical and Moral Considerations in Non-Human Agents” in 2016, “AI for Social Good” in 2017, and “AI and Society: Ethics, Safety and Trustworthiness in Intelligent Agents” in 2018. More information is available at aaai.org/conference/spring-symposia/sss24/.

AAAI Spring Symposia Proceedings 1992-2018

The AAAI Spring Symposium Series is a legendary conference that has been held since 1992, usually at Stanford University. Until 2018, AAAI, the leading US artificial intelligence organization, published the proceedings itself; since 2019, each symposium has been responsible for its own. Following a restructuring of the AAAI website, the proceedings can be found in a section of the new “AAAI Conference and Symposium Proceedings” page. In 2016, Stanford University hosted one of the most important gatherings on machine ethics and robot ethics ever, the symposium “Ethical and Moral Considerations in Non-Human Agents” … Contributors included Peter M. Asaro, Oliver Bendel, Joanna J. Bryson, Lily Frank, The Anh Han, and Luis Moniz Pereira. Also present was Ronald C. Arkin, one of the most important machine ethicists and, because of his military research, a controversial one. The 2017 and 2018 symposia were also groundbreaking for machine ethics and attracted experts from around the world. The papers can be accessed at aaai.org/aaai-publications/aaai-conference-proceedings.

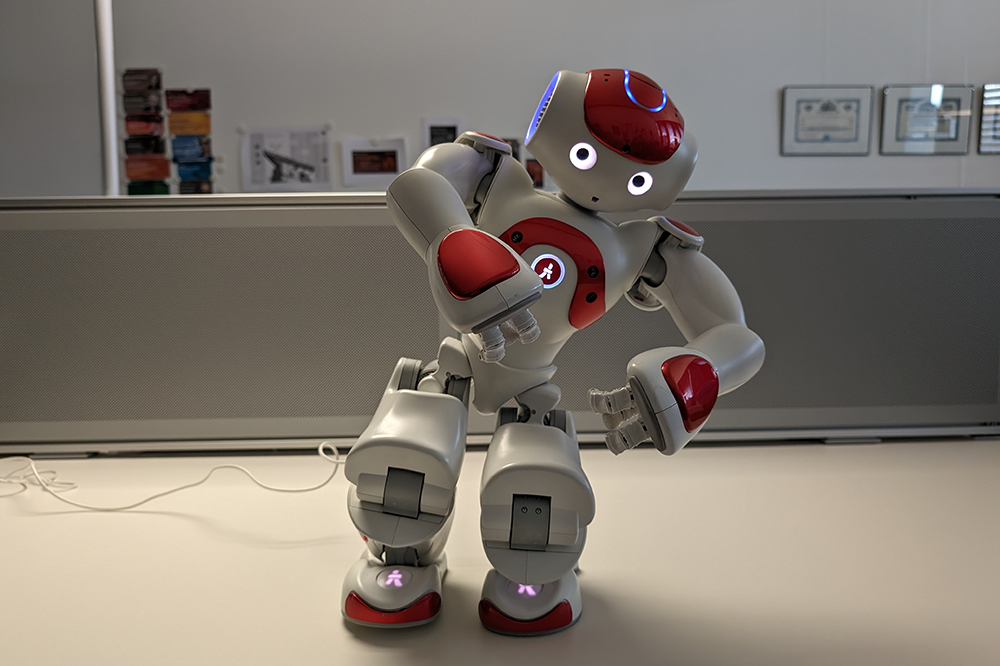

The Latest Findings in Social Robotics

The proceedings of ICSR 2022 were published in early 2023. Included is the paper “The CARE-MOMO Project” by Oliver Bendel and Marc Heimann. From the abstract: “In the CARE-MOMO project, a morality module (MOMO) with a morality menu (MOME) was developed at the School of Business FHNW in the context of machine ethics. This makes it possible to transfer one’s own moral and social convictions to a machine, in this case the care robot with the name Lio. The current model has extensive capabilities, including motor, sensory, and linguistic. However, it cannot yet be personalized in the moral and social sense. The CARE-MOMO aims to eliminate this state of affairs and to give care recipients the possibility to adapt the robot’s ‘behaviour’ to their ideas and requirements. This is done in a very simple way, using sliders to activate and deactivate functions. There are three different categories that appear with the sliders. The CARE-MOMO was realized as a prototype, which demonstrates the functionality and aids the company in making concrete decisions for the product. In other words, it can adopt the morality module in whole or in part and further improve it after testing it in facilities.” The book (part II of the proceedings) can be downloaded or ordered via link.springer.com/book/10.1007/978-3-031-24670-8.
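The slider principle from the abstract, with functions switched on and off and grouped into three categories, can be modeled as a small data structure. This is a hypothetical sketch, not the CARE-MOMO prototype or Lio's software: the classes `Slider` and `MoralityMenu`, the function names such as `remind_medication`, and the category labels are invented for illustration, since the abstract does not list them. Functions default to off, and the robot would consult `allowed()` before carrying one out.

```python
from dataclasses import dataclass, field

MAX_CATEGORIES = 3  # the abstract mentions three slider categories


@dataclass
class Slider:
    name: str  # hypothetical robot function, e.g. "remind_medication"
    category: str  # hypothetical category label
    enabled: bool = False


@dataclass
class MoralityMenu:
    sliders: dict[str, Slider] = field(default_factory=dict)

    def add(self, name: str, category: str, enabled: bool = False) -> None:
        """Register a function under a category, enforcing the category limit."""
        categories = {s.category for s in self.sliders.values()}
        if category not in categories and len(categories) >= MAX_CATEGORIES:
            raise ValueError(f"at most {MAX_CATEGORIES} categories allowed")
        self.sliders[name] = Slider(name, category, enabled)

    def set_slider(self, name: str, enabled: bool) -> None:
        """Move a slider; raises KeyError for functions not in the menu."""
        self.sliders[name].enabled = enabled

    def allowed(self, name: str) -> bool:
        """Checked by the robot before performing a function; unknown means no."""
        slider = self.sliders.get(name)
        return slider is not None and slider.enabled

    def by_category(self) -> dict[str, list[str]]:
        """Group function names by category, as the menu would display them."""
        grouped: dict[str, list[str]] = {}
        for s in self.sliders.values():
            grouped.setdefault(s.category, []).append(s.name)
        return grouped


menu = MoralityMenu()
menu.add("use_first_name", "communication")
menu.add("remind_medication", "care", enabled=True)
menu.add("share_status_with_relatives", "privacy")
menu.set_slider("use_first_name", True)
print(menu.allowed("use_first_name"))  # True
print(menu.allowed("share_status_with_relatives"))  # False
```

Treating unknown functions as not allowed keeps the default conservative: nothing happens unless the care recipient has switched it on.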