The Centers for Disease Control and Prevention of the United States Department of Health and Human Services has launched a chatbot that will help people decide what to do if they have potential coronavirus symptoms such as fever, cough, or shortness of breath. This was reported by the magazine MIT Technology Review on 24 March 2020. “The hope is the self-checker bot will act as a form of triage for increasingly strained health-care services.” (MIT Technology Review, 24 March 2020) According to the magazine, the chatbot asks users questions about their age, gender, and location, and about any symptoms they’re experiencing. It also inquires whether they may have met someone diagnosed with COVID-19. On the basis of the users’ replies, it recommends the best next step. “The bot is not supposed to replace assessment by a doctor and isn’t intended to be used for diagnosis or treatment purposes, but it could help figure out who most urgently needs medical attention and relieve some of the pressure on hospitals.” (MIT Technology Review, 24 March 2020) The service is intended for people who are currently located in the US. Internationally, research is under way not only on useful chatbots but also on moral ones.

The Old, New Neons

The company Neon picks up an old concept with its Neons, namely that of avatars. Twenty years ago, Oliver Bendel distinguished between two different types in the Lexikon der Wirtschaftsinformatik. With reference to the second, he wrote: “Avatars, on the other hand, can represent any figure with certain functions. Such avatars appear on the Internet – for example as customer advisors and newsreaders – or populate the adventure worlds of computer games as game partners and opponents. They often have an anthropomorphic appearance and independent behaviour or even real characters …” (Lexikon der Wirtschaftsinformatik, 2001, own translation) It is precisely this type that the company, which is part of the Samsung Group and was founded by Pranav Mistry, is now adapting, taking advantage of today’s possibilities. “These are virtual figures that are generated entirely on the computer and are supposed to react autonomously in real time; Mistry spoke of a latency of less than 20 milliseconds.” (Heise Online, 8 January 2020, own translation) The Neons are supposed to show emotions (as do some social robots that are conquering the market) and thus facilitate and strengthen bonds. “The AI-driven character is neither a language assistant à la Bixby nor an interface to the Internet. Instead, it is a friend who can speak several languages, learn new skills and connect to other services, Mistry explained at CES.” (Heise Online, 8 January 2020, own translation)

AI Workshop at the University of Potsdam

In 2018, Dr. Yuefang Zhou and Prof. Dr. Martin Fischer initiated the first international workshop on intimate human-robot relations at the University of Potsdam, “which resulted in the publication of an edited book on developments in human-robot intimate relationships”. This year, Prof. Dr. Martin Fischer, Prof. Dr. Rebecca Lazarides, and Dr. Yuefang Zhou are organizing the second edition. “As interest in the topic of humanoid AI continues to grow, the scope of the workshop has widened. During this year’s workshop, international experts from a variety of different disciplines will share their insights on motivational, social and cognitive aspects of learning, with a focus on humanoid intelligent tutoring systems and social learning companions/robots.” (Website Embracing AI) The international workshop “Learning from Humanoid AI: Motivational, Social & Cognitive Perspectives” will take place on 29 and 30 November 2019 at the University of Potsdam. Keynote speakers are Prof. Dr. Tony Belpaeme, Prof. Dr. Oliver Bendel, Prof. Dr. Angelo Cangelosi, Dr. Gabriella Cortellessa, Dr. Kate Devlin, Prof. Dr. Verena Hafner, Dr. Nicolas Spatola, Dr. Jessica Szczuka, and Prof. Dr. Agnieszka Wykowska. Further information is available at embracingai.wordpress.com/.

Talk to Transformer

Artificial intelligence is spreading into more and more application areas. American scientists have now developed a system that can supplement texts: “Talk to Transformer”. The user enters a few sentences – and the AI system adds further passages. “The system is based on a method called DeepQA, which is based on the observation of patterns in the data. This method has its limitations, however, and the system is only effective for data on the order of 2 million words, according to a recent news article. For instance, researchers say that the system cannot cope with the large amounts of data from an academic paper. Researchers have also been unable to use this method to augment texts from academic sources. As a result, DeepQA will have limited application, according to the researchers. The scientists also note that there are more applications available in the field of text augmentation, such as automatic transcription, the ability to translate text from one language to another and to translate text into other languages.” The sentences in quotation marks are not from the author of this blog. They were written by the AI system itself. You can try it via talktotransformer.com.

Honey, I shrunk the AI

Some months ago, researchers at the University of Massachusetts showed the climate toll of machine learning, especially deep learning. Training Google’s BERT, with its 340 million parameters, emitted nearly as much carbon as a round-trip flight between the East and West coasts. According to Technology Review, the trend could also accelerate the concentration of AI research into the hands of a few big tech companies. “Under-resourced labs in academia or countries with fewer resources simply don’t have the means to use or develop such computationally expensive models.” (Technology Review, 4 October 2019) In response, some researchers are focused on shrinking the size of existing models without losing their capabilities. The magazine wrote enthusiastically: “Honey, I shrunk the AI”. (Technology Review, 4 October 2019) The advantages go beyond the environment and access to state-of-the-art AI. According to Technology Review, tiny models will help bring the latest AI advancements to consumer devices. “They avoid the need to send consumer data to the cloud, which improves both speed and privacy. For natural-language models specifically, more powerful text prediction and language generation could improve myriad applications like autocomplete on your phone and voice assistants like Alexa and Google Assistant.” (Technology Review, 4 October 2019)
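
To get a feeling for why model size matters on a phone, a little arithmetic helps. The following sketch estimates how much storage the weights of a 340-million-parameter model would need at different numeric precisions. The bytes-per-parameter figures are the standard sizes of these number formats; the function name `model_size_mb` is made up for this illustration, and storing weights at lower precision is only one conceivable way of shrinking a model, not necessarily the one the researchers quoted by Technology Review use.

```python
# Rough storage estimate for model weights (illustrative sketch only).
# Assumption: every parameter is stored as a single number of the given type.
BYTES_PER_PARAM = {"float32": 4, "float16": 2, "int8": 1}


def model_size_mb(num_params, dtype):
    """Return the approximate size of the weights in megabytes (10^6 bytes)."""
    return num_params * BYTES_PER_PARAM[dtype] / 1_000_000


BERT_PARAMS = 340_000_000  # parameter count mentioned in the post

for dtype in BYTES_PER_PARAM:
    print(f"{dtype}: about {model_size_mb(BERT_PARAMS, dtype):,.0f} MB")
```

At 32-bit precision the weights alone come to roughly 1.36 gigabytes, which makes it plausible why smaller models are attractive for consumer devices.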

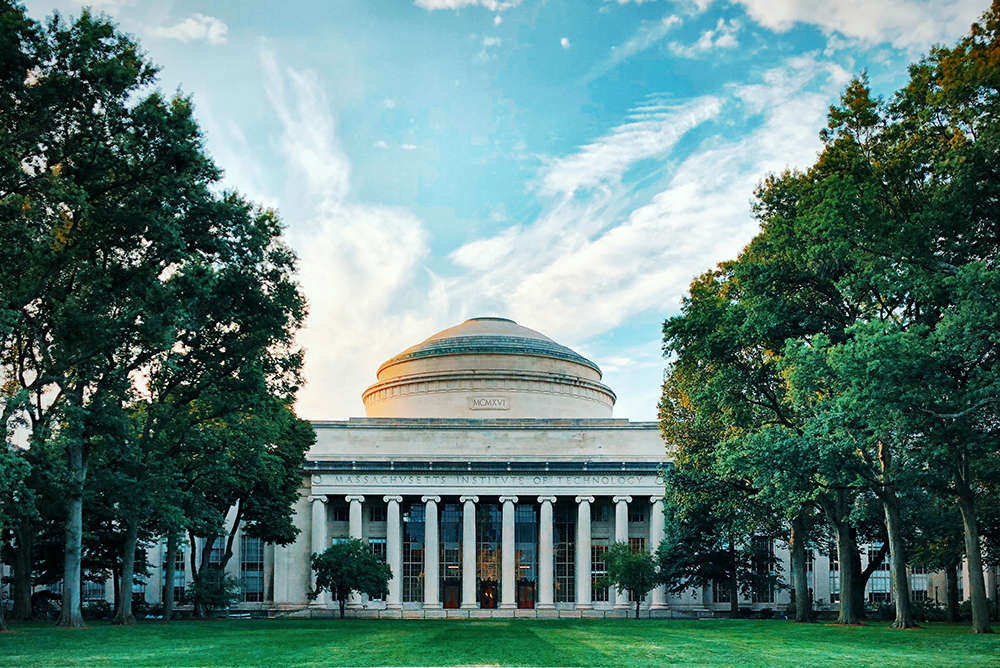

Ethics in AI for Kids and Teens

In summer 2019, Blakeley Payne ran a very special course at MIT. According to an article in Quartz magazine, the graduate student had created an AI ethics curriculum to make kids and teens aware of how AI systems mediate their everyday lives. “By starting early, she hopes the kids will become more conscious of how AI is designed and how it can manipulate them. These lessons also help prepare them for the jobs of the future, and potentially become AI designers rather than just consumers.” (Quartz, 4 September 2019) Not everyone is convinced that artificial intelligence is the right topic for kids and teens. “Some argue that developing kindness, citizenship, or even a foreign language might serve students better than learning AI systems that could be outdated by the time they graduate. But Payne sees middle school as a unique time to start kids understanding the world they live in: it’s around ages 10 to 14 years that kids start to experience higher-level thoughts and deal with complex moral reasoning. And most of them have smartphones loaded with all sorts of AI.” (Quartz, 4 September 2019) There is no doubt that the MIT course could be a model for schools around the world. The renowned university once again seems to be setting new standards.

Fighting Deepfakes with Deepfakes

A deepfake (or deep fake) is a picture or video created with the help of artificial intelligence that looks authentic but is not. The term also covers the methods and techniques used to produce such content, chiefly machine learning and especially deep learning. Deepfakes are created as works of art and visual objects, or as means of discreditation, manipulation and propaganda. Politics and pornography are therefore closely interwoven with the phenomenon. According to Futurism, Facebook is teaming up with a consortium of Microsoft researchers and several prominent universities for a “Deepfake Detection Challenge”. “The idea is to build a data set, with the help of human user input, that’ll help neural networks detect what is and isn’t a deepfake. The end result, if all goes well, will be a system that can reliably [detect] fake videos online. Similar data sets already exist for object or speech recognition, but there isn’t one specifically made for detecting deepfakes yet.” (Futurism, 5 September 2019) The winning team will get a prize – presumably a substantial sum of money. Facebook is investing a total of 10 million dollars in the competition.

An AI System for Multiple-choice Tests

According to the New York Times, the Allen Institute for Artificial Intelligence unveiled a new system that correctly answered more than 90 percent of the questions on an eighth-grade science test and more than 80 percent on a 12th-grade exam. Is it really a breakthrough for AI technology, as the title of the article claims? This is a subject of controversy among experts. The newspaper is optimistic: “The system, called Aristo, is an indication that in just the past several months researchers have made significant progress in developing A.I. that can understand languages and mimic the logic and decision-making of humans.” (NYT, 4 September 2019) Aristo was built for multiple-choice tests. “It took standard exams written for students in New York, though the Allen Institute removed all questions that included pictures and diagrams.” (NYT, 4 September 2019) Some questions could be answered by simple information retrieval. There are numerous systems that access Google and Wikipedia, including artifacts of machine ethics like LIEBOT and BESTBOT. But for the answers to other questions logical thinking was required. Perhaps Aristo is helping to abolish multiple-choice tests – not so much because it can solve them, but because they are often not effective.
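
What “simple information retrieval” can mean for a multiple-choice question is easy to sketch. The toy example below picks the answer option whose words, together with the words of the question, overlap most with a single sentence in a small text collection. Everything in it is an assumption made for illustration: the function names, the three-sentence corpus and the sample question are invented, and nothing here reflects how Aristo actually works.

```python
import re


def tokenize(text):
    """Lowercase the text and return its words as a set."""
    return set(re.findall(r"[a-z]+", text.lower()))


def answer_by_retrieval(question, options, corpus):
    """Pick the option that, combined with the question, best matches one sentence."""
    question_words = tokenize(question)
    best_option, best_score = None, -1
    for option in options:
        query = question_words | tokenize(option)
        # Score = largest word overlap between the query and any single sentence.
        score = max(len(query & tokenize(sentence)) for sentence in corpus)
        if score > best_score:
            best_option, best_score = option, score
    return best_option


# Hypothetical mini corpus and question, invented for this sketch.
corpus = [
    "Plants use sunlight to make food through photosynthesis.",
    "The heart pumps blood through the body.",
    "Water freezes at zero degrees Celsius.",
]
question = "Which process do plants use to make food?"
options = ["photosynthesis", "digestion", "evaporation", "condensation"]

print(answer_by_retrieval(question, options, corpus))
```

Such word matching only works when the answer is stated almost literally somewhere in the text collection. Questions that require combining several facts are out of its reach, which is where the logical thinking mentioned above comes in.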

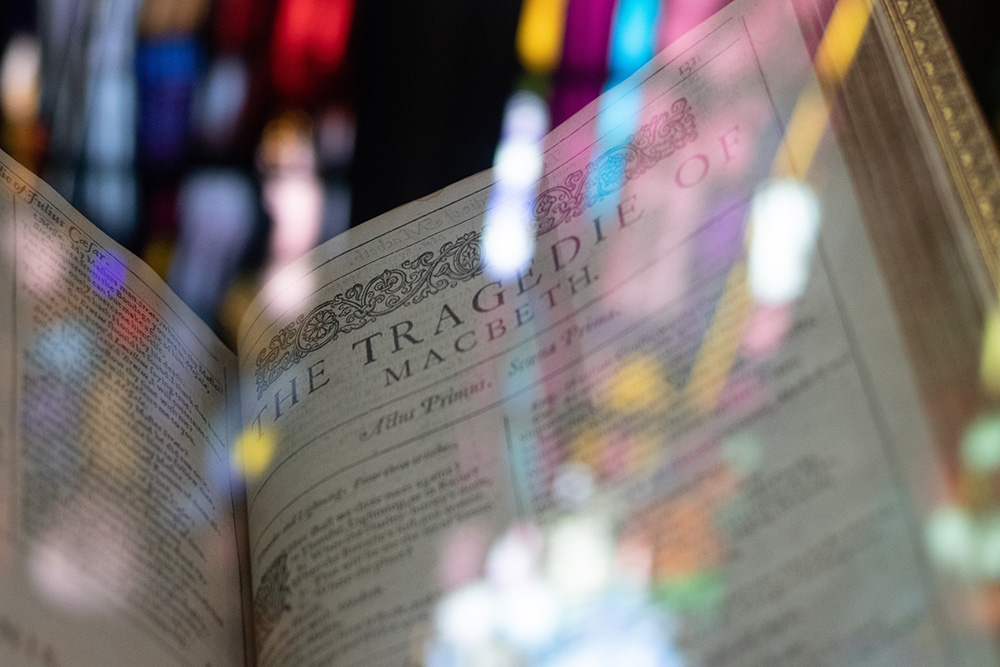

The Resurrection of Dead Authors

lyrikline.org is an interesting and touching project. The writers themselves read their works. You can listen to Ingeborg Bachmann as well as Paul Celan. Spoken literature has been booming in Europe and the USA for about 15 years. The Chinese discovered audiobooks some time ago. Producing such books professionally is generally time-consuming and expensive. Moreover, the authors are not always available. The Chinese search engine Sogou wants to solve this problem with the help of visualization technology and artificial intelligence. It creates avatars of the authors who speak with the voice of the authors. “At the China Online Literature+ conference, the company announced that the first two authors to receive this avatar treatment will be Yue Guan and Bu Xin Tian Shang Diao Xian Bing … If these first AI author avatars are well-received, others could follow – and that could just be the jumping-off point. A company could produce book readings by deceased authors, for example, as long as enough audio and video footage exists. Eventually, they could even incorporate hologram technology to really give bibliophiles the feeling of being at their favorite author’s reading.” (futurism.com, 15 August 2019) So you could have Ingeborg Bachmann or Charles Bukowski read all of their own texts and watch them, many years after their death. Whether the results really sound like the originals, and whether they move us just as much, is open to doubt. But there is no doubt that this is an exciting project.

The Relationship between Artificial Intelligence and Machine Ethics

Artificial intelligence has human or animal intelligence as a reference and attempts to represent it in certain aspects. It can also try to deviate from human or animal intelligence, for example by solving problems differently with its systems. Machine ethics is dedicated to machine morality, producing it and investigating it. Whether one likes the concepts and methods of machine ethics or not, one must acknowledge that novel autonomous machines are emerging that appear more complete than earlier ones in a certain sense. It is almost surprising that artificial morality did not join artificial intelligence much earlier. Machines that simulate human intelligence and human morality for manageable areas of application seem to be an especially good idea. But what if a superintelligence with a supermorality forms a new species superior to ours? That’s science fiction, of course. But it is also something that some scientists want to achieve. Basically, it’s important to clarify terms and explain their connections. This is done in a graphic that was published in July 2019 on informationsethik.net and is linked here.